ChatGPT and Search

Is there anything left to say?

When ChatGPT first appeared on the scene on November 30, 2022, I wrote:

Two phrases are worth highlighting from that tweet: "information/knowledge-seeking searches" and "real interactivity". Let's keep these phrases in the back of our minds, as we proceed to explore the question "What does ChatGPT mean for the future of Search?". Somewhat more narrowly — and, given Google's enormous footprint on the Search ecosystem, somewhat inevitably — this also morphs into the question "Is this a moment of significant vulnerability for Google?".

In this multi-part series, we'll explore these questions through various lenses. Spoiler alert: my take on the first question is "it's going to be a very exciting 2-3 years ahead", and my feeling about the second question is "very likely, but not necessarily for the reasons most people might think, and not one that Google is unprepared for".

Let's get started!

Lens 1: Search intents and the evolution of the Search UI

There's no simpler way to say this: Virtually every innovation in Search user experience came from Google.

In the beginning, the main user interface for Search comprised ten blue links produced by applying rudimentary information retrieval techniques using the words in a search query. Google's first innovation was PageRank, which, while it made the quality of the ranked set of results much better, was still very much within the framework of keyword-based ranking of webpages.

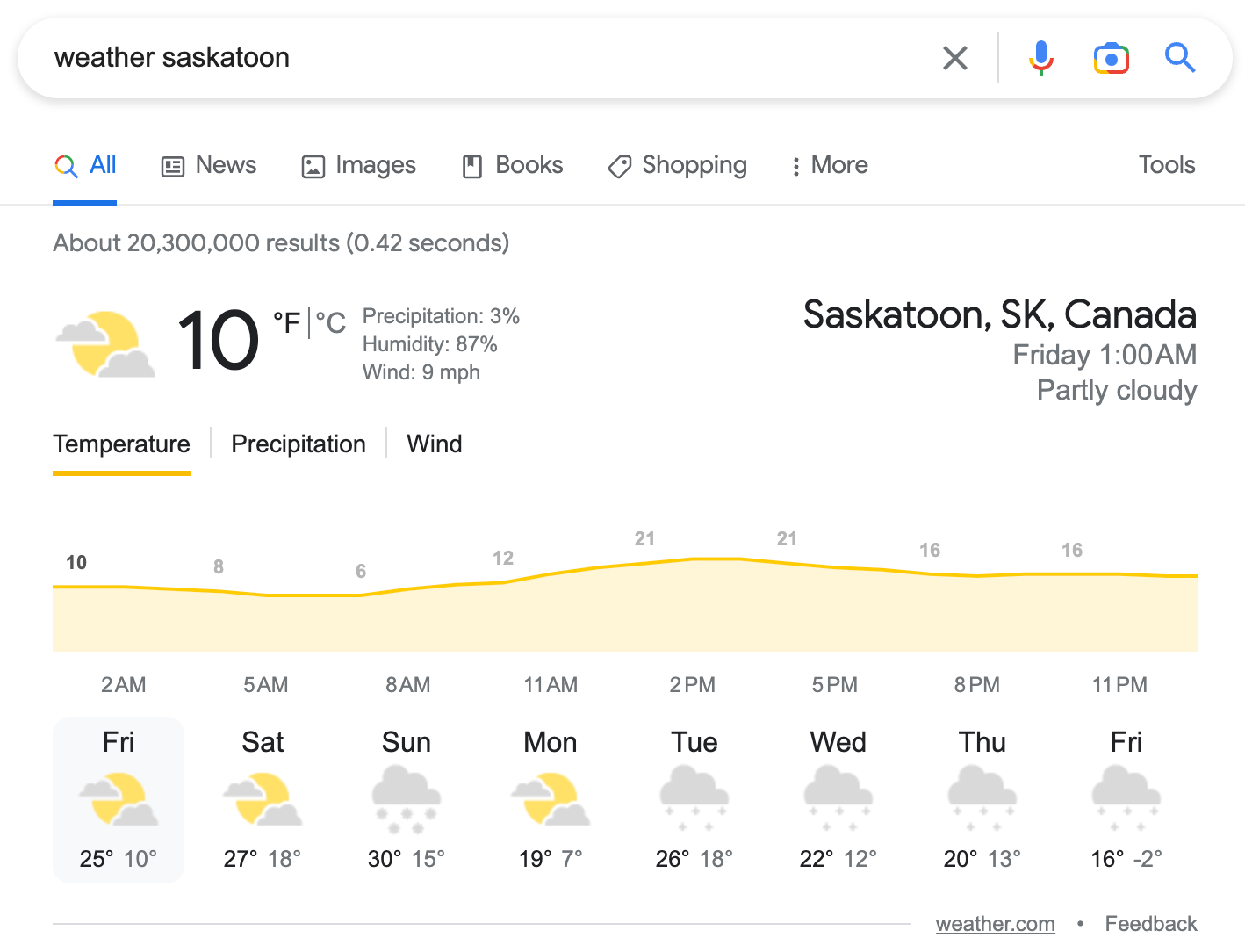

Between 2000 and 2010, Google introduced a variety of UI innovations that made users' lives better; the most prominent ones were universal search, spell correction, auto-completions, and “one boxes”. Universal search blends different “types” of results (images, videos, news, etc.) and is probably one innovation that Google “copied” from other search engines: the South Korean search engine Naver is often credited with this idea. Spell correction and auto-completions have been phenomenally successful tools that help users formulate queries, including on topics they aren't very familiar with. One boxes are judiciously placed result units that directly answer a simple user need with a richer UI than ten blue links: think simple search queries like "weather in chicago" or "msft stock" or "nfl scores", where a rich visual gives us what we're looking for without having to click on any link.

At the core of successful innovations like one boxes is a simple idea: isolate a class of search intents for which a different UI treatment will make users' lives easier, then figure out the right way to deliver those results (e.g., sourcing data from authoritative entities).
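To make the idea concrete, here is a minimal sketch of "isolate an intent class, then give it its own UI". It is a toy illustration only, not how Google does it: the regex patterns, the `route_query` function, and the UI labels are all hypothetical names invented for this example, and a real search engine would rely on far richer signals than pattern matching.

```python
from __future__ import annotations

import re

# Hypothetical intent patterns for three one-box classes mentioned above.
# These are illustrative assumptions, not real search-engine rules.
ONE_BOX_PATTERNS = {
    "weather": re.compile(r"^weather in (?P<place>.+)$"),
    "stock": re.compile(r"^(?P<ticker>[a-z]{1,5}) stock$"),
    "scores": re.compile(r"^(?P<league>nfl|nba|mlb) scores$"),
}


def route_query(query: str) -> tuple[str, dict[str, str] | None]:
    """Return (ui_type, slots); fall back to ten blue links if no intent matches."""
    # Lowercase and collapse whitespace so "MSFT  stock" and "msft stock" agree.
    normalized = " ".join(query.lower().split())
    for intent, pattern in ONE_BOX_PATTERNS.items():
        match = pattern.match(normalized)
        if match:
            return f"{intent}_one_box", match.groupdict()
    return "ten_blue_links", None
```

A query such as "weather in chicago" would be routed to the weather one box with the place filled in, while a broad query like "carbon sequestration" falls through to the ordinary ranked list. The second half of the idea, sourcing the data from an authoritative entity, is deliberately left out of this sketch.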

A brief taxonomy of Search Intents

Search intents served by "one boxes" constitute a slice of what are traditionally known, within a trichotomy established by a landmark paper of Broder, as "informational queries". (The other two are navigational queries and transactional queries. Navigational queries ask the search engine to guide the user to a specific website; for example, "delta" in the USA is most commonly a search query asking for the URL of the Delta Air Lines website. The benchmark for search engines' performance on navigational queries is their ability to rank the desired site at the top position. More on transactional queries later in this series.)

A decade in the rearview mirror: NLP takes center stage in Search

Informational queries come in a large variety, from very fine-grained ones like wishing to know tomorrow's weather in a city to very broad ones like exploring a topic such as carbon sequestration. In the decade from 2010 to 2020, Google made three substantial enhancements to its user experience/interface with respect to three large (occasionally overlapping) slices of user searches:

When the search query is the name of a (well-known) entity such as Beyoncé or Jeff Bezos or the Hoover Dam, Google returns a rich "knowledge panel" that gives an overview of that entity, along with key facts about it (e.g., date of birth and parents/siblings for people; additional facts like net worth for people like Jeff Bezos, about whose wealth users often seek facts; hours open, location, etc. for prominent places; and so on). Here Google anticipates a variety of intents the user might have when typing this entity's name into the search box; see, for example, the segment of the knowledge panel for the search query “bezos” below.

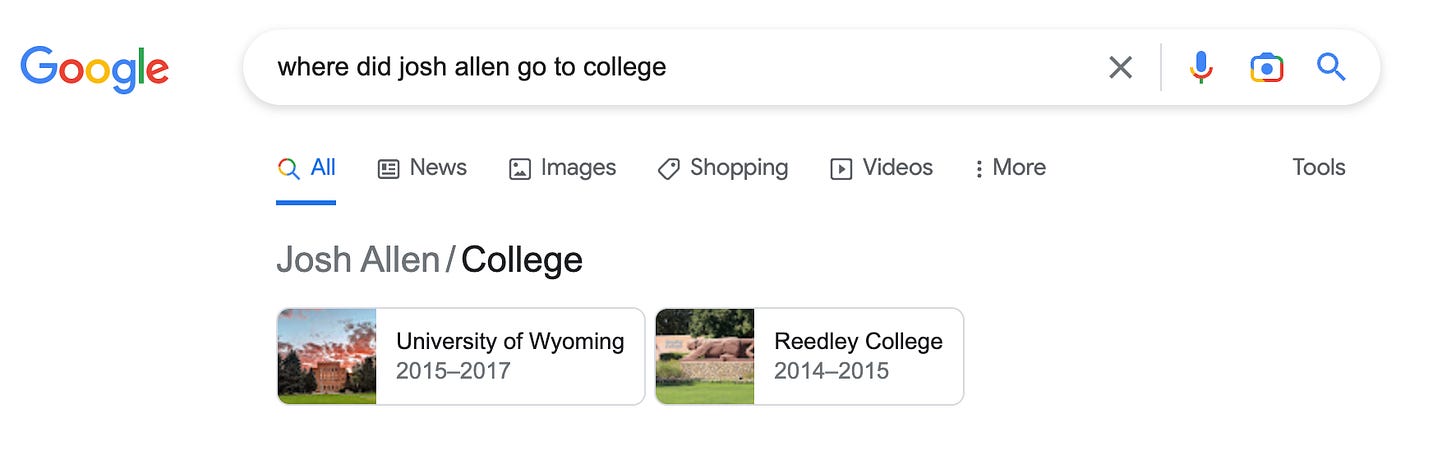

When the search query asks for some attribute of a well-known entity (e.g., Obama's birthplace, college attended by Josh Allen), Google's Knowledge Graph is consulted and a concise answer appears at the top of the search result page. Within this category of searches, a subtle shift has happened over the past decade: users no longer need to type awkward keyword phrases like "Obama birthplace" or "Josh Allen college". Instead, they might type a more natural version of the same information need, e.g., "where was Obama born?" or "which college did Josh Allen attend?".

Google's ability to map these natural-language intents into simple Knowledge Graph lookups has steadily gotten better, especially as the deep natural language understanding revolution was ushered in by the invention of Transformers and the large language models built on them. But let's not forget that Google had built these features, using conventional natural-language processing techniques, long before Transformers or large language models were invented. In more recent years, Google has expanded the set of such answerable queries beyond the Knowledge Graph, and a search like "how many zip codes are there in the US?" results in the top result (still a blue link) accompanied by a large-font, prominent search snippet that directly gives the answer the user seeks.
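As a rough illustration of what "mapping a natural-language intent into a simple Knowledge Graph lookup" can mean, here is a toy sketch in the spirit of the conventional, pre-Transformer techniques mentioned above. Everything in it is an assumption made for the example: the two-entry `TOY_GRAPH`, the `ALIASES` table, the question templates, and the `answer` function are hypothetical and bear no relation to Google's actual Knowledge Graph or its query understanding.

```python
from __future__ import annotations

import re

# Tiny stand-in for a knowledge graph: (entity, attribute) -> value.
# Toy data for illustration; "josh allen" here means the Buffalo Bills quarterback.
TOY_GRAPH = {
    ("barack obama", "birthplace"): "Honolulu, Hawaii",
    ("josh allen", "college"): "University of Wyoming",
}

# Hypothetical alias table resolving a short name to a canonical entity.
ALIASES = {"obama": "barack obama"}

# Each template maps a query shape (natural or keyword-style) to an attribute.
QUESTION_TEMPLATES = [
    (re.compile(r"^where was (?P<entity>.+?) born\??$"), "birthplace"),
    (re.compile(r"^which college did (?P<entity>.+?) attend\??$"), "college"),
    (re.compile(r"^(?P<entity>.+?) birthplace$"), "birthplace"),
    (re.compile(r"^(?P<entity>.+?) college$"), "college"),
]


def answer(query: str) -> str | None:
    """Map a query to an (entity, attribute) lookup; None if nothing matches."""
    normalized = " ".join(query.lower().split())
    for pattern, attribute in QUESTION_TEMPLATES:
        match = pattern.match(normalized)
        if match:
            entity = match.group("entity")
            entity = ALIASES.get(entity, entity)
            return TOY_GRAPH.get((entity, attribute))
    return None
```

The point of the sketch is that the keyword query and the natural-language question collapse to the same (entity, attribute) pair, so both forms reach the same fact. Hand-written templates like these break down quickly as phrasing varies, which is where learned language models make the difference.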

Things get significantly more interesting with the next class of (informational) search queries: in a feature called "Featured Snippets", Google inverted the "URL first, followed by Snippets" paradigm, and presented the snippet (a segment of the result page) in large font, and above the URL itself. This typically happens when you ask a "how" or "why" or "what is" question — e.g., "why is the sky blue", "how did george costanza get a job with the yankees", or "what is special about manuka honey?".

Featured Snippets are extremely useful to users in getting quickly to a succinct answer, along with a link to a good web page where that answer appears. As Google's deep NLP capabilities have blossomed, this feature has become enormously powerful and high-quality; for example, Google now has a phenomenal ability to understand that multiple high-quality sources are in agreement about the answer to a question.

A key theme in the segue from primitive one boxes to very powerful featured snippets is Google's evolution in terms of its ability to deal with natural-language search queries. This is something that Google led the industry in, and something that systems like ChatGPT share with Google.

Interactivity in Search: You don’t even realize you’re searching more!

A second silent revolution that has happened alongside the Knowledge Graph and NLP revolutions in Search is Google's ability to allow users to interact with the search engine. As users, sometimes we're in "quick-fact-seeking mode"; at other times, we're in "exploratory mode", where we are curious about a broad topic and wish to learn more than can be communicated with a single search query. Again, during the past decade, Google has made two tremendous innovations here:

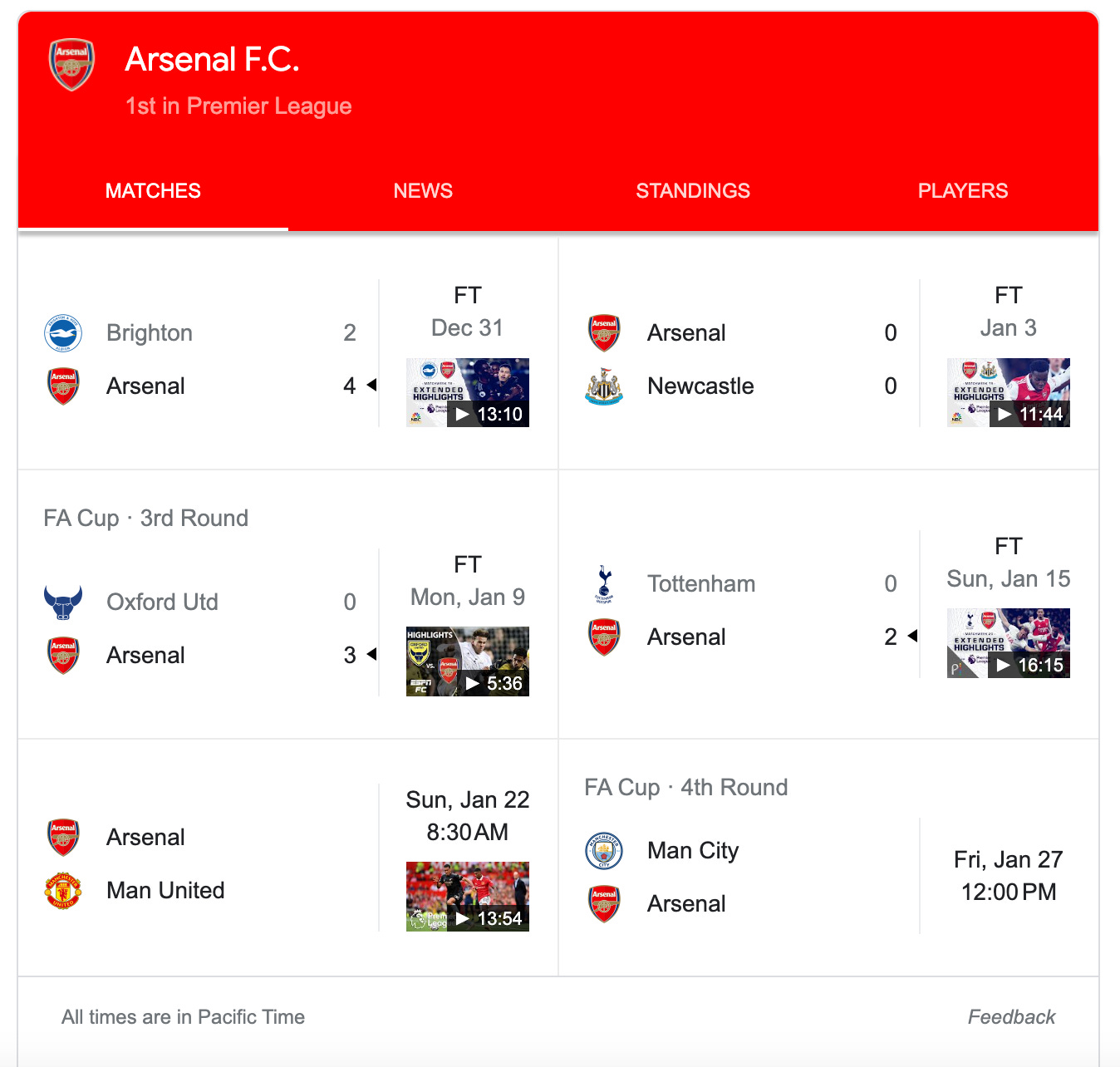

Powered by the Knowledge Graph, Google provides interactive (often live) results for a number of search queries, specifically queries about sports scores, weather, stock quotes, etc. The interactivity here is simple and delightful, and carried out through clicks / touches / swipes — starting with a simple intent to learn a football game score, I often type "arsenal fc" into Google, and before I realize it, I've spent more than ten minutes exploring the Premier League, consuming information about various matches, teams, players, etc., and often my clicks take me very far afield from where I started.

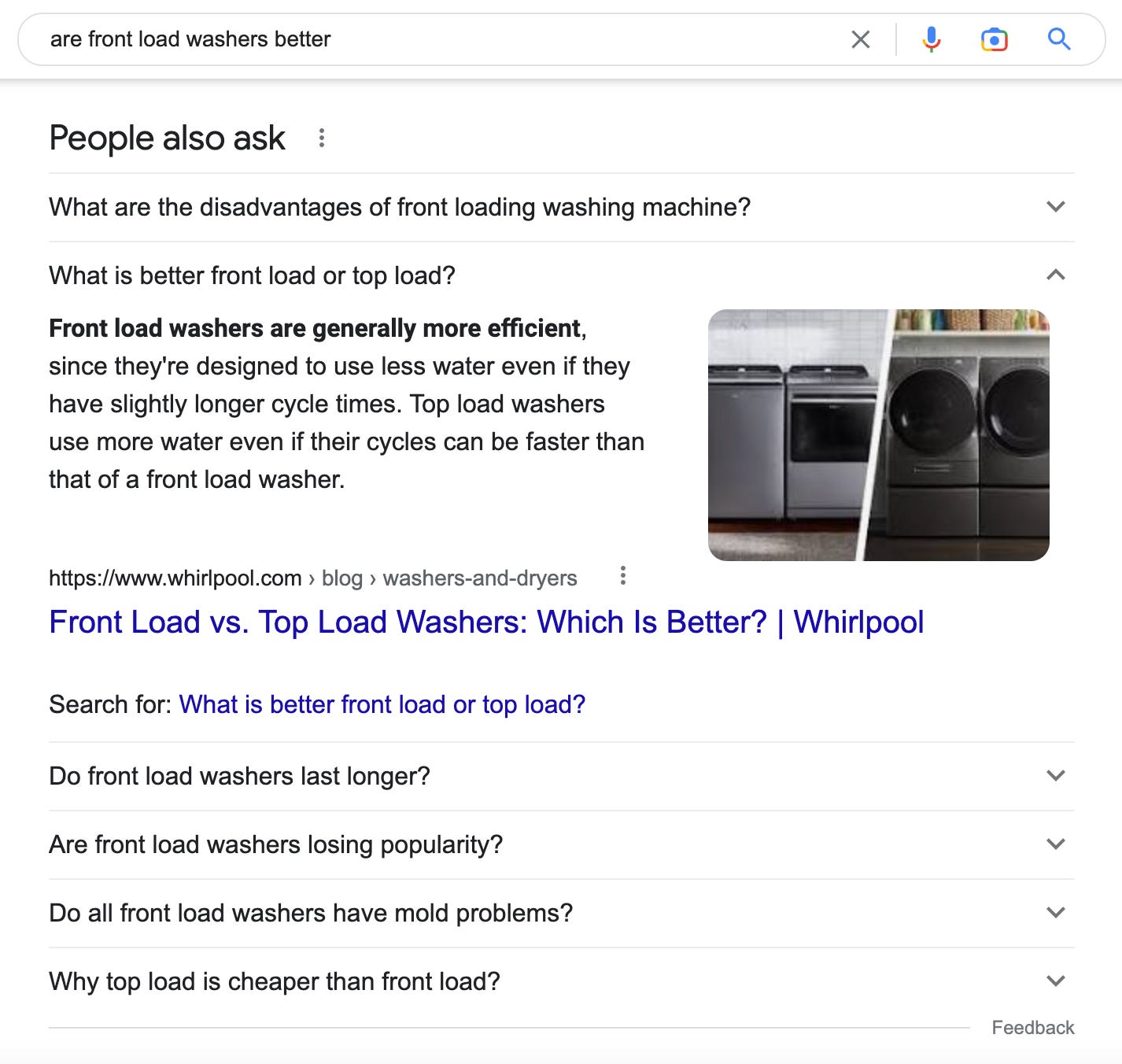

The second magical user experience comes in the "People also ask:" feature: when you start with a search that triggers a featured snippet (those what/why/how queries), you'll often see (below the top one or two results) a module that lists not search results but natural-language questions germane to the original question you typed. For example, if you start with "which ones are better, front loading or top loading washing machines?" you'll see a bunch of related questions like "why are top loaders cheaper?" or "why does my front loading washer smell funny?", and every click on one of these gives an in-situ featured snippet, along with a new set of related questions. One pleasing consequence of this journey is that within a few minutes, the user learns an enormous amount about a topic, especially answers to questions they didn't even think to ask!

Summary: Until late 2022, the pinnacle of Search user experiences consisted of interactive browsing of the Knowledge Graph, along with "Featured Snippets" and "People also ask", both powered by deep natural-language understanding.

Until, that is, ChatGPT arrived.

More to come:

Lens 2: Fake threats and Real threats to Google's supremacy over the years.

Lens 3: Trust.

Lens 4: Antitrust.

Lens 5: The challenge ahead: Strings and Things, Take 2.

Lens 6: Commercial searches and monetization.